Fly.io is not advertising itself as a Django-first hosting platform.

However, it provides all the building blocks for a sweet Django setup.

tl;dr

It’s always a rollercoaster of emotions to deploy an app somewhere for the first time. I ran into the caveats in this post while designing and implementing a small but scalable Django setup.

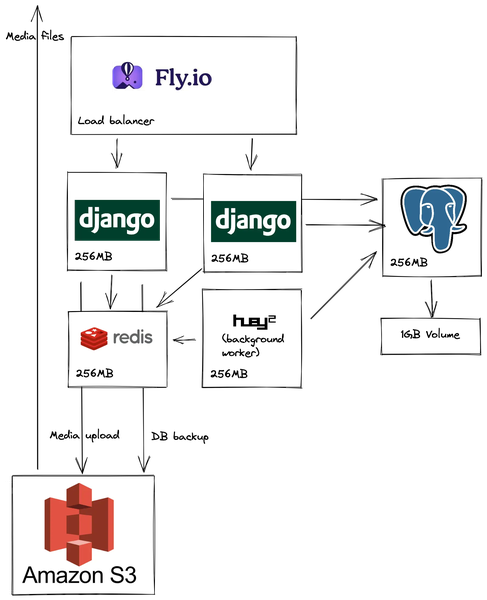

This setup includes:

- redundant web workers with zero downtime deployments

- a background worker queue

- a database

- automated backups to S3

- media file handling

- and static file serving.

It costs around $10 per month to run.

Django on 256MB of memory

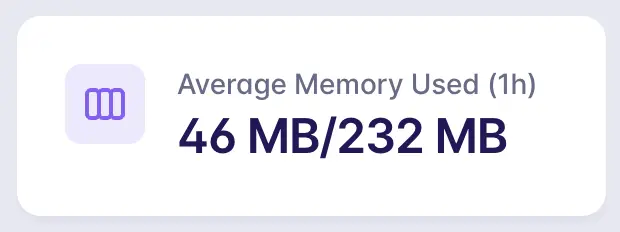

Fly offers 3 instances with 256MB of memory for free. It’s possible to run Django on 256MB with 2 Gunicorn workers and swap enabled.

Without a swap file, workers run out of memory and crash every now and then.
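The amount of swap a VM actually has is listed in /proc/meminfo, which you can read from a fly ssh console session, for example. Below is a minimal sketch using only the standard library. The helper names parse_meminfo and swap_total_mb are hypothetical and not part of the original setup:

```python
from pathlib import Path
from typing import Dict


def parse_meminfo(text: str) -> Dict[str, int]:
    """Map /proc/meminfo field names to their numeric values (mostly in kB)."""
    values: Dict[str, int] = {}
    for line in text.splitlines():
        name, _, rest = line.partition(":")
        fields = rest.split()
        if fields:
            # The first field is the number; an optional "kB" unit follows it
            values[name.strip()] = int(fields[0])
    return values


def swap_total_mb(meminfo_path: str = "/proc/meminfo") -> int:
    """Return the configured swap in whole MB (0 when no swap is active)."""
    info = parse_meminfo(Path(meminfo_path).read_text())
    return info.get("SwapTotal", 0) // 1024
```

On a VM without a swap file, SwapTotal is 0. After the 512MB swap file below is enabled, it should report a little under 512.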

The following lines enable swapping:

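```bash
#!/bin/bash
# scripts/web.sh
set -e

# Set up swapping: a 512MB swap file keeps the Gunicorn workers
# from crashing when the 256MB VM runs out of memory
fallocate -l 512M /swapfile
chmod 0600 /swapfile
mkswap /swapfile
# Make the kernel reluctant to swap until memory is tight
echo 10 > /proc/sys/vm/swappiness
swapon /swapfile

# exec replaces the shell, so Gunicorn receives Fly's stop signal directly
exec gunicorn config.wsgi:application -b 0.0.0.0:8080 --workers=2 --capture-output --enable-stdio-inheritance
```

A CDN running on Django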

Fly’s unique selling proposition is to deploy Docker containers across the world. Let’s see how we can use that to create our own CDN.

File-based cache

With Django’s FileBasedCache, pages can be served from the file system.

By default, Django does not open a connection to the database if the database is not used during a request.

As a result, cached landing pages and other static pages are served from the file system of the instance closest to the user, without a round trip to the database.

```python
# config/settings.py
CACHES = {
    "default": {
        "BACKEND": "django.core.cache.backends.filebased.FileBasedCache",
        "LOCATION": "/tmp/cache",
    }
}
```

Static files

Fly can serve static files directly from within the VM, so neither NGINX nor WhiteNoise is needed.

The Docker images are built on fly’s remote Docker builders. Static files need to be collected and compressed during the image build step.

```dockerfile
# Secrets and env vars are not available at build time,
# so every variable the settings validate gets a placeholder value
ARG DJANGO_SETTINGS_MODULE="config.settings.production"
ARG DJANGO_SECRET_KEY="for building purposes"
ARG DJANGO_ADMIN_URL="for building purposes"
ARG MAILJET_API_KEY="for building purposes"
ARG MAILJET_SECRET_KEY="for building purposes"
ARG DATABASE_URL="for building purposes"
ARG REDIS_URL="redis://forbuildingpurposes"
ARG AWS_ACCESS_KEY_ID="for building purposes"
ARG AWS_SECRET_ACCESS_KEY="for building purposes"
ARG GOOGLE_API_KEY="for building purposes"

RUN python manage.py tailwind installcli
RUN python manage.py tailwind build
RUN python manage.py collectstatic --noinput
RUN python manage.py compress --force
```

Runtime secrets are only injected when a VM boots, so they are not available at build time. ARG values are exposed as environment variables to the RUN steps, so the dummy values let the settings module pass environment variable validation.

Some packages validate the content of the secrets as well, which is why REDIS_URL gets a placeholder that looks like a Redis URL. Make sure to set values that pass these validations.
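Here is a minimal sketch of that kind of check. It shows why a bare placeholder string is not always enough. The helpers require_env and require_redis_url and the exception class are hypothetical and not taken from any particular package:

```python
import os
from urllib.parse import urlparse


class MissingOrInvalidEnv(Exception):
    """Raised when a required environment variable is absent or malformed."""


def require_env(name: str) -> str:
    """Return the value of an environment variable, failing loudly if it is unset."""
    value = os.environ.get(name)
    if not value:
        raise MissingOrInvalidEnv(f"{name} is not set")
    return value


def require_redis_url(name: str = "REDIS_URL") -> str:
    """Like require_env, but also insist on a redis:// or rediss:// URL with a host."""
    value = require_env(name)
    parsed = urlparse(value)
    if parsed.scheme not in ("redis", "rediss") or not parsed.hostname:
        raise MissingOrInvalidEnv(f"{name} must look like redis://host:port/db")
    return value
```

With ARG REDIS_URL="redis://forbuildingpurposes", a check like this passes during the image build. A value such as "for building purposes" would abort collectstatic.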

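```python
# config/settings.py
STATIC_ROOT = "/app/static"  # collectstatic writes here, fly serves it
STATIC_URL = "/static/"  # must match url_prefix in fly.toml
```

```toml
# fly.toml
[[statics]]
guest_path = "/app/static"
url_prefix = "/static"
```

With the file system cache and fly’s static file serving, Django serves pages without talking to any other service.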

Deploy Django VMs to multiple regions as close as possible to your users.

Redis

Redis is used mainly as a message broker in this setup. Instead of using the hosted Redis offering of Upstash, I decided to host it on a 256MB instance myself.

It’s sitting on about 50MB of memory usage while idling.

This is the repo of the Redis app. It’s a separate project because various apps are using it as a message broker.

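If your Redis app is called redis-app, set REDIS_URL to redis://default:password@redis-app.internal:6379/0. The .internal hostname resolves over Fly's private network, so Redis does not need to be exposed publicly.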
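If the Redis password contains characters such as @ or /, it must be percent-encoded before it goes into REDIS_URL. redis-py's from_url decodes it again. Below is a minimal sketch. internal_redis_url is a hypothetical helper, and the default user and port 6379 are taken from the URL above:

```python
from urllib.parse import quote, urlsplit


def internal_redis_url(app_name: str, password: str, db: int = 0, port: int = 6379) -> str:
    """Build a REDIS_URL for a Redis app reachable on Fly's private network."""
    # Percent-encode the password so "@", ":" or "/" cannot be
    # mistaken for URL delimiters
    encoded = quote(password, safe="")
    return f"redis://default:{encoded}@{app_name}.internal:{port}/{db}"


url = internal_redis_url("redis-app", "p@ss/word")
parts = urlsplit(url)
print(url)  # redis://default:p%40ss%2Fword@redis-app.internal:6379/0
print(parts.hostname, parts.port)  # redis-app.internal 6379
```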

Database backups

Fly comes with built-in database backups. I prefer to keep backups on S3.

dbbackup and huey enable painless and reliable backups.
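huey needs a Redis connection, and its Django integration (djhuey) reads its configuration from a HUEY dictionary in the settings. Below is a minimal sketch that assumes the REDIS_URL from the Redis section. The queue name is a hypothetical placeholder:

```python
# config/settings.py (sketch)
import os

INSTALLED_APPS = [
    # ...
    "huey.contrib.djhuey",
]

HUEY = {
    "huey_class": "huey.RedisHuey",
    "name": "app-name",  # hypothetical queue name, e.g. the fly app name
    "immediate": False,  # enqueue tasks instead of running them in-process
    "connection": {
        # Same REDIS_URL that points at the separate Redis app
        "url": os.environ.get("REDIS_URL", "redis://localhost:6379/0"),
    },
}
```

By default, djhuey sets immediate to the value of DEBUG. Setting it explicitly keeps tasks on the queue in every environment.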

The following task creates a daily compressed backup at 3am:

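```python
# <yourapp>/tasks.py: djhuey autodiscovers the tasks modules of installed apps
from django.core.management import call_command
from huey import crontab
from huey.contrib.djhuey import lock_task, periodic_task


@periodic_task(crontab(minute="0", hour="3"))
@lock_task("backup-lock")  # skip the run while a previous backup holds the lock
def backup_db():
    # --compress gzips the dump, --clean removes backups beyond DBBACKUP_CLEANUP_KEEP
    call_command("dbbackup", "--compress", "--clean", "--noinput")
```

dbbackup can use django-storages to upload backups to S3.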

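```python
# <yourapp>/contrib/storages/storages.py
from storages.backends.s3boto3 import S3Boto3Storage  # type: ignore


class BackupStorage(S3Boto3Storage):
    bucket_name = "performance90"
    location = "backup"  # key prefix inside the bucket
```

```python
# config/settings.py
DBBACKUP_STORAGE = "<yourapp>.contrib.storages.storages.BackupStorage"
DBBACKUP_CONNECTORS = {
    "default": {
        # Dump with pg_dump's custom (binary) format instead of plain SQL
        "CONNECTOR": "dbbackup.db.postgresql.PgDumpBinaryConnector",
    },
}
```

The dbbackup management command calls pg_dump to dump the database. pg_dump refuses to dump a server with a newer major version than its own, so it has to be at least as new as the Postgres server.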

The python:3.10.6-slim Docker image is based on Debian bullseye, whose package repositories ship the Postgres 13 client tools, while fly provisions Postgres 14 (at the time of writing).
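A version mismatch otherwise only shows up when the nightly backup fails. A quick version check, for example in a management command or a CI step, can surface it earlier. Below is a minimal sketch. The helper names are hypothetical, and it assumes the server's major version comes from SHOW server_version_num (140005 for Postgres 14.5):

```python
import re
import shutil
import subprocess
from typing import Optional

# Matches e.g. "pg_dump (PostgreSQL) 14.5 (Debian 14.5-1.pgdg110+1)"
_VERSION_RE = re.compile(r"\(PostgreSQL\)\s+(\d+)")


def parse_major(version_output: str) -> int:
    """Extract the major version from pg_dump --version output (PostgreSQL 10+)."""
    match = _VERSION_RE.search(version_output)
    if match is None:
        raise ValueError(f"unrecognised version string: {version_output!r}")
    return int(match.group(1))


def installed_pg_dump_major() -> Optional[int]:
    """Return the major version of the pg_dump on PATH, or None if it is missing."""
    executable = shutil.which("pg_dump")
    if executable is None:
        return None
    result = subprocess.run(
        [executable, "--version"], capture_output=True, text=True, check=True
    )
    return parse_major(result.stdout)


def server_major(server_version_num: int) -> int:
    """Convert e.g. 140005 (SHOW server_version_num) to 14."""
    return server_version_num // 10000


def can_dump(pg_dump_major: int, server_major_version: int) -> bool:
    """pg_dump cannot dump a server with a newer major version than itself."""
    return pg_dump_major >= server_major_version
```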

Install pg_dump version 14 on Debian (which the Django Dockerfile is based on):

```dockerfile
# Add the PostgreSQL apt repository (pgdg) to get postgresql-client-14 on bullseye.
# RUN steps execute as root unless USER is changed, so sudo is not needed.
RUN apt-get update && apt-get install -y gnupg ca-certificates wget && \
    echo "deb http://apt.postgresql.org/pub/repos/apt bullseye-pgdg main" > /etc/apt/sources.list.d/pgdg.list && \
    wget --quiet -O - https://www.postgresql.org/media/keys/ACCC4CF8.asc | apt-key add - && \
    apt-get update && \
    apt-get install -y --no-install-recommends build-essential libpq-dev postgresql-client-14 && \
    rm -rf /var/lib/apt/lists/*
```

Health checks

Fly uses health checks to decide when to restart VMs and where to route requests. django-health-check works well for signalling the health of a Django web worker VM.
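Fly's HTTP check is a plain GET request with a custom Host header, so you can reproduce it by hand before deploying. Below is a minimal standard-library sketch. It assumes the web process listens on 127.0.0.1:8080 and uses the /ht/ path and health.check Host header configured in this section. probe is a hypothetical helper name:

```python
from urllib.error import HTTPError
from urllib.request import ProxyHandler, Request, build_opener

# Talk to the app directly, even if HTTP(S)_PROXY is set in the environment
_opener = build_opener(ProxyHandler({}))


def probe(
    base_url: str = "http://127.0.0.1:8080",
    path: str = "/ht/",
    host_header: str = "health.check",
    timeout: float = 5.0,
) -> int:
    """Send a GET request shaped like Fly's HTTP check and return the status code."""
    request = Request(base_url + path, headers={"Host": host_header}, method="GET")
    try:
        with _opener.open(request, timeout=timeout) as response:
            return response.status
    except HTTPError as error:
        # urllib raises on 4xx/5xx; Django answers a disallowed Host with 400
        return error.code
```

If probe() returns 400 against a locally started Gunicorn, health.check is probably missing from ALLOWED_HOSTS. A 500 means one of the health checks failed.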

```python
# config/settings.py
INSTALLED_APPS = [
    # ...
    "health_check",
    "health_check.db",
    "health_check.cache",
    "health_check.storage",
    "health_check.contrib.migrations",
    "health_check.contrib.psutil",  # disk and memory checks
    "health_check.contrib.redis",  # reads the REDIS_URL setting
    # ...
]

# Thresholds for the psutil checks
HEALTH_CHECK = {
    "DISK_USAGE_MAX": 90,  # percent
    "MEMORY_MIN": 5,  # in MB
}
```

Define /ht/ as the health check endpoint:

```python
# Root URLconf
from django.urls import include, path

urlpatterns = [
    # ...
    # Health check
    path("ht/", include("health_check.urls")),
    # ...
]
```

Tell fly about the endpoint:

```toml
# fly.toml
[[services.http_checks]]
grace_period = "5s"
interval = 10000  # milliseconds
method = "get"
path = "/ht/"
protocol = "http"
restart_limit = 0
timeout = 5000  # milliseconds
tls_skip_verify = false

[services.http_checks.headers]
Host = "health.check"
```

Django rejects requests whose Host header is not listed in ALLOWED_HOSTS, so the health check fails unless health.check is added to ALLOWED_HOSTS:

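```python
# config/settings.py
import os

import environ

env = environ.Env()

# Fly sets FLY_APP_NAME in every VM
FLY_HOSTNAME = f'{os.getenv("FLY_APP_NAME")}.fly.dev'
ALLOWED_HOSTS = env.list(
    "DJANGO_ALLOWED_HOSTS",
    default=[FLY_HOSTNAME, "www.example.com", "health.check"],
)
```

Multiple processes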

It’s possible to run multiple processes from the same Dockerfile.

Use multiple processes to run web workers and background workers:

```toml
# fly.toml
app = "app-name"
kill_signal = "SIGINT"
kill_timeout = 5
# No top-level "processes = []" here: it would clash with the [processes] table

[deploy]
release_command = "python manage.py migrate"

[processes]
web = "sh /app/scripts/web.sh"
worker = "sh /app/scripts/worker.sh"

[[services]]
# Only the web process group receives HTTP traffic
processes = ["web"]
internal_port = 8080
```

Process groups scale independently. To deploy two web VMs across two regions, one per region:

```console
$ fly scale count web=2 --max-per-region 1
```

Fly creates a release only when the release_command succeeds, which makes it the ideal hook for running migrations.
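The worker process group is started with scripts/worker.sh, whose contents the post does not show. Here is a minimal version that assumes huey's Django integration. The file body is hypothetical, and you could copy the swap setup from web.sh if the worker VM also has 256MB:

```bash
#!/bin/bash
# scripts/worker.sh (hypothetical contents)
set -e

# Start the huey consumer; it also schedules periodic tasks such as backup_db
exec python manage.py run_huey
```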

Final words

This is the list of caveats I ran into while moving a Django app from Dokku to fly.

After a couple of weeks on fly, I stand by the title: Django + fly.io = ❤️

If you need help with Django, don’t hesitate to reach out 😌